Google BigQuery is a cloud-based enterprise data warehouse that offers fast SQL queries and interactive analysis of very large datasets. BigQuery is built on Google's Dremel technology and is optimized for read-heavy analytical workloads rather than frequent transactional updates.

Beyond reporting on Google Analytics data, BigQuery supports loading, querying, processing, exporting, and visualizing big data.

The platform uses a columnar storage model that makes scanning data much faster, because a query reads only the columns it references. It also uses a tree architecture that dispatches queries and aggregates results efficiently across many machines. Additionally, BigQuery is serverless and highly scalable, with fast deployment and on-demand pricing.
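To make the columnar idea concrete, here is a minimal sketch in plain Python. It is a toy illustration only and does not reflect how BigQuery stores data internally; the sample records and the `to_columns` helper are made up for this example.

```python
# Toy illustration only: this is NOT BigQuery's internal storage format.
# The records and the to_columns helper are hypothetical.
rows = [
    {"user": "ana", "country": "TR", "revenue": 12.5},
    {"user": "bob", "country": "US", "revenue": 7.0},
    {"user": "cem", "country": "TR", "revenue": 3.5},
]


def to_columns(records):
    """Pivot a list of row dicts into one list per column."""
    columns = {}
    for record in records:
        for name, value in record.items():
            columns.setdefault(name, []).append(value)
    return columns


columns = to_columns(rows)

# Row layout: every full record is visited even though one field is needed.
total_from_rows = sum(record["revenue"] for record in rows)

# Column layout: only the "revenue" column is read.
total_from_columns = sum(columns["revenue"])

print(total_from_rows, total_from_columns)
```

Both totals are the same, but the columnar version never touches the `user` or `country` values, which is the property that makes aggregations over a few columns cheap.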

Data in BigQuery is automatically encrypted both at rest and in transit.

Google BigQuery Data Warehouse Architecture

BigQuery is based on Dremel technology, which Google has used internally since 2006.

Dremel: The query engine that powers BigQuery requests. It dynamically allocates slots (units of compute capacity) to queries as needed and shares them among the users querying at the same time. A single user can get thousands of slots to run their queries. It takes more than just a lot of hardware to keep queries running fast.

Colossus: BigQuery relies on Colossus, Google's latest-generation distributed file system. Every Google data center has its own Colossus cluster, and each cluster has enough disks to give every BigQuery user thousands of dedicated disks at a time. Colossus also handles replication, recovery (when disks fail), and distributed management.

Jupiter Network: Google's internal data center network. Its bandwidth is what allows BigQuery to keep storage and compute separate.

Google BigQuery Setup

Because the platform is built for petabyte-scale analytics, it can also collect more data from different sources and organize it faster.

Combining BigQuery's machine learning capabilities with existing datasets and structures can also improve storage design, streamline querying and data scanning, and even lower costs by eliminating redundant structures and optimizing storage for each organization's usage patterns.

BigQuery is part of Google Cloud Platform (GCP) and integrates with other GCP services and tools. It can process data stored in other GCP products, including Cloud Storage, the Cloud SQL relational database service, the Cloud Bigtable NoSQL database, Google Drive, and Google's distributed database Spanner.

You don't have to worry about the size of the storage, how much RAM your query needs, or the number of processors on a server. The system automatically scales to run your queries and releases the resources when they complete. Google also publishes public sample datasets for you to practice on.
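As a rough sketch of practicing on a public dataset, the snippet below queries the `bigquery-public-data.samples.shakespeare` table with the `google-cloud-bigquery` Python client. It assumes that the library is installed and that default Google Cloud credentials are configured; the `run_sample_query` helper and the project ID you pass in are illustrative, not part of the original setup.

```python
# Sketch under assumptions: google-cloud-bigquery is installed and
# application default credentials are available in the environment.
SAMPLE_QUERY = """
SELECT corpus, SUM(word_count) AS total_words
FROM `bigquery-public-data.samples.shakespeare`
GROUP BY corpus
ORDER BY total_words DESC
LIMIT 5
"""


def run_sample_query(project_id):
    """Run SAMPLE_QUERY and return (corpus, total_words) tuples.

    project_id is the project that is billed for the query (hypothetical
    value supplied by the caller).
    """
    # Imported lazily so this module loads even without the library.
    from google.cloud import bigquery

    client = bigquery.Client(project=project_id)
    rows = client.query(SAMPLE_QUERY).result()  # waits for the job to finish
    return [(row["corpus"], row["total_words"]) for row in rows]
```

Calling `run_sample_query("your-project-id")` should return the five works with the most words. No cluster size or memory setting appears anywhere in the code, which reflects the serverless model described above.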

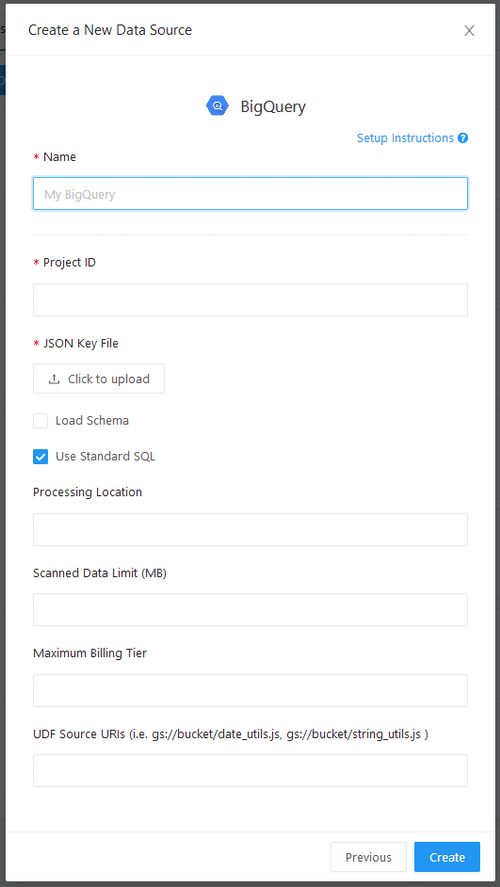

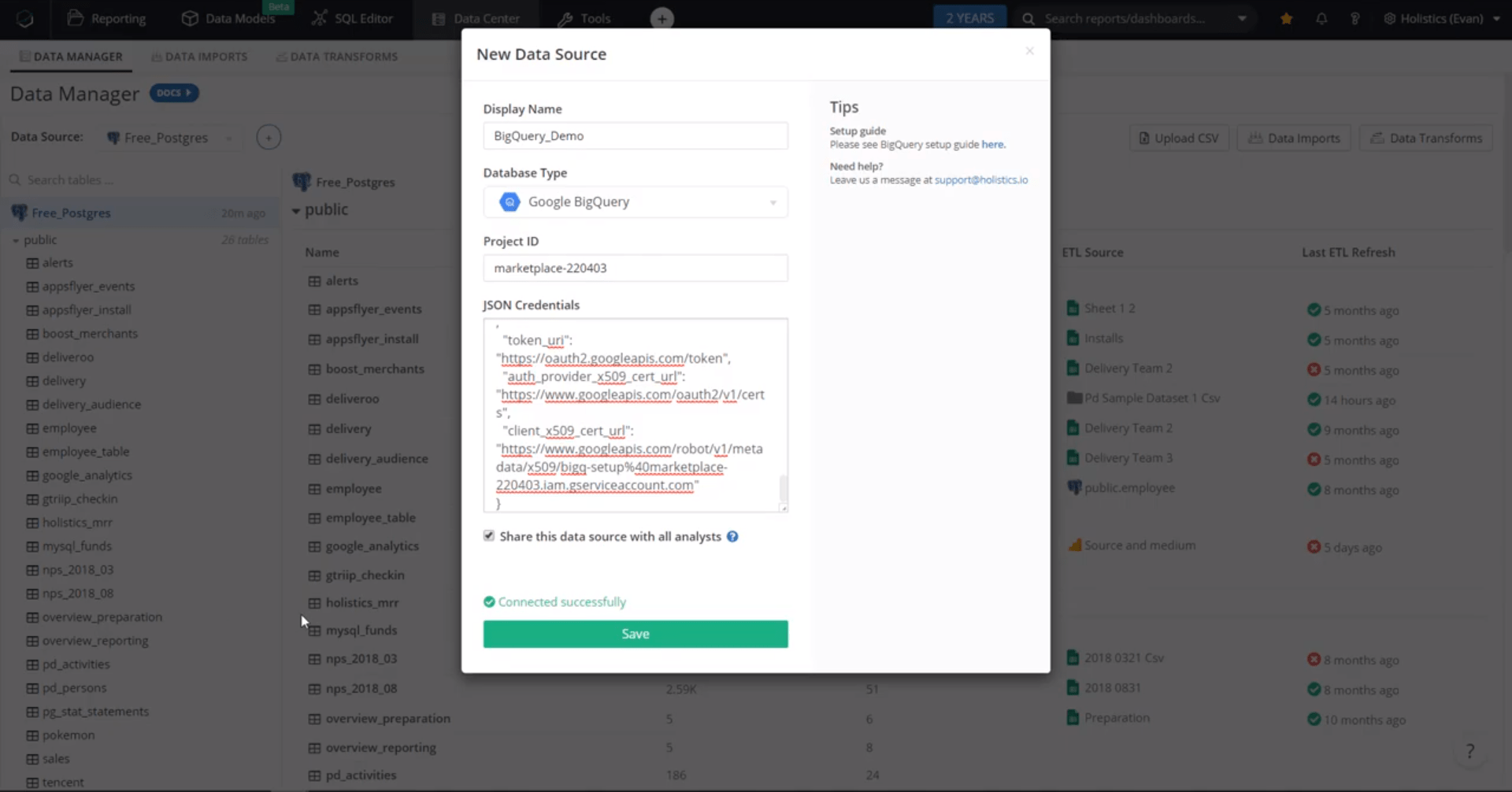

In the BigQuery datasource setup screen, the project ID and JSON key file are always required. You can get a key file when you create a new service account with Google.
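Before pasting a key file into a data source form, it can help to confirm that the file really is a service account key. The sketch below checks a few fields that such JSON keys normally contain (`type`, `project_id`, `private_key`, `client_email`). The helper names are hypothetical and the check is deliberately shallow; it does not verify that the key is still valid.

```python
import json

# Fields normally present in a service account JSON key.
REQUIRED_FIELDS = ("type", "project_id", "private_key", "client_email")


def validate_service_account_key(key):
    """Return (project_id, client_email) or raise ValueError.

    `key` is the parsed JSON key as a dict. Hypothetical helper.
    """
    missing = [name for name in REQUIRED_FIELDS if name not in key]
    if missing:
        raise ValueError("key file is missing fields: " + ", ".join(missing))
    if key["type"] != "service_account":
        raise ValueError("expected type 'service_account', got %r" % key["type"])
    return key["project_id"], key["client_email"]


def read_service_account_key(path):
    """Load a key file from disk and validate it."""
    with open(path, encoding="utf-8") as handle:
        return validate_service_account_key(json.load(handle))
```

The returned project ID is the same value the setup screen asks for, so the key file can serve as a cross-check against what you typed.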

BigQuery 2.0 and later supports both legacy SQL syntax and standard SQL syntax. Redash supports both, with standard SQL as the default. You set this preference at the data source level by toggling the 'use standard SQL' box, and your selection is passed to BigQuery along with your query text. If some of your queries use legacy SQL and others use standard SQL, you can create two data sources.
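One visible difference between the two dialects is how tables are referenced: standard SQL uses backticks and dots, while legacy SQL uses square brackets with a colon after the project. The following sketch shows both forms. The `table_reference` helper and its `use_standard_sql` flag are hypothetical names chosen to mirror the check box, not part of Redash or BigQuery.

```python
def table_reference(project, dataset, table, use_standard_sql=True):
    """Format a fully qualified table name for the chosen SQL dialect."""
    if use_standard_sql:
        # Standard SQL: `project.dataset.table`
        return f"`{project}.{dataset}.{table}`"
    # Legacy SQL: [project:dataset.table]
    return f"[{project}:{dataset}.{table}]"


def count_rows_query(project, dataset, table, use_standard_sql=True):
    """Build a simple row-count query in the chosen dialect."""
    ref = table_reference(project, dataset, table, use_standard_sql)
    return f"SELECT COUNT(*) AS n FROM {ref}"


print(count_rows_query("bigquery-public-data", "samples", "shakespeare"))
print(count_rows_query("bigquery-public-data", "samples", "shakespeare",
                       use_standard_sql=False))
```

A query written in one form fails under the other dialect, which is why keeping separate data sources for legacy and standard queries is the practical option.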

If you get a "job not found" error similar to "Not found: Job :", check that your processing location is correct.
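The location matters because BigQuery jobs are tied to the location where they ran, and a lookup that names the wrong location may not find the job. As a hedged illustration, the sketch below builds the URL for the BigQuery REST `jobs.get` call, which accepts an optional `location` query parameter. The `job_lookup_url` helper and the example IDs are hypothetical.

```python
from urllib.parse import quote, urlencode

API_ROOT = "https://bigquery.googleapis.com/bigquery/v2"


def job_lookup_url(project_id, job_id, location=None):
    """Build the jobs.get URL, adding the location when one is given."""
    url = (
        f"{API_ROOT}/projects/{quote(project_id, safe='')}"
        f"/jobs/{quote(job_id, safe='')}"
    )
    if location:
        # Without this parameter a job outside the default location
        # may be reported as not found.
        url += "?" + urlencode({"location": location})
    return url


# Hypothetical project and job IDs, for illustration only.
print(job_lookup_url("my-project", "job_123", location="asia-northeast1"))
```

In a tool such as Redash the same value is set once in the data source settings rather than per request.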

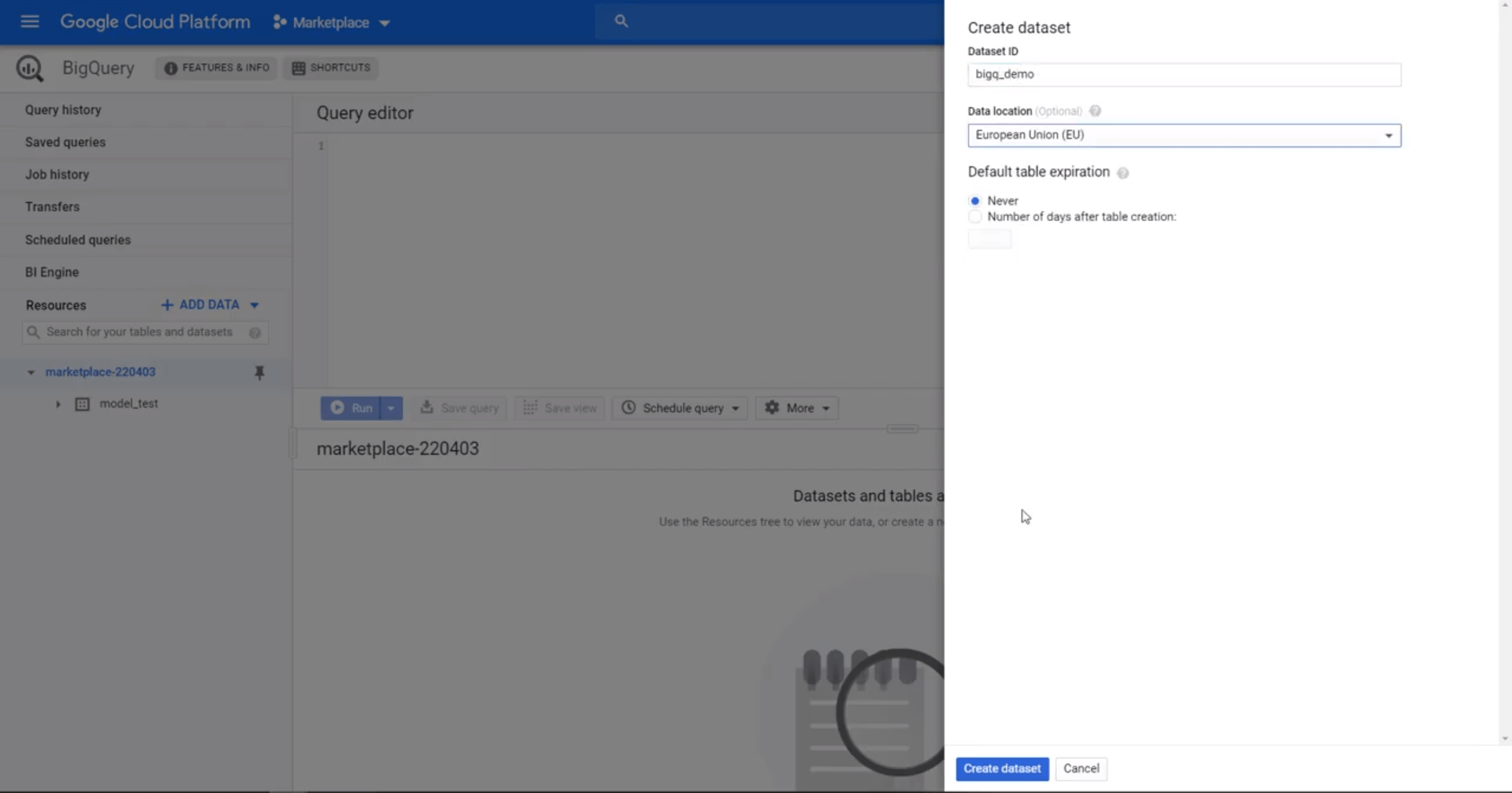

First, sign in to the Google Cloud Console. Select or create a project, open BigQuery from the side menu, and create a new dataset. A dataset acts as a 'folder' for the data tables that you want to load.

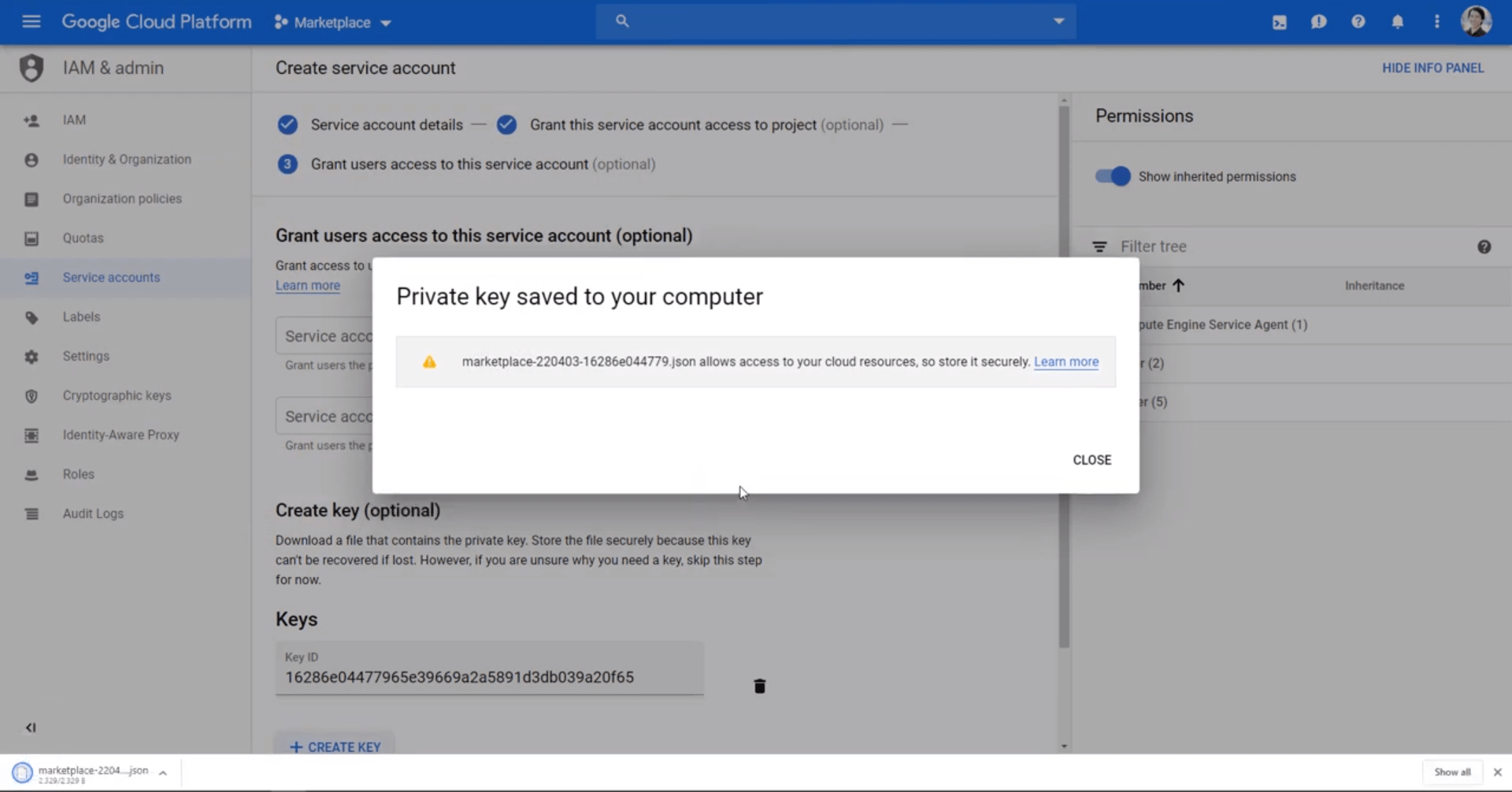

Under the IAM and admin menu, create a new service account with BigQuery access to manage your data and generate a JSON key to connect BigQuery to Holistics.

Save this JSON file; you will need it later to connect BigQuery. Remember to grant the service account sufficient BigQuery privileges, such as the BigQuery Admin role.

Now, to add a new datasource to Holistics, select BigQuery from the drop-down menu, copy your Google project ID value from your Google console, paste the JSON key, then test and save your BigQuery datasource.

Your BigQuery data warehouse is now connected and ready!

You can now start migrating data to BigQuery for your analytics.