Now that we've discussed the differences between indexing and crawling and the impact of JavaScript on SEO, let's cover best practices for JavaScript SEO.

5-Second Timeout

Although Google has not officially confirmed it, Googlebot is widely believed not to wait much longer than about 5 seconds for a page to render. Content that is available by the load event (within roughly 5 seconds) can therefore be indexed.

Indexable URLs

Pages require indexable URLs with server-side support for each landing page. This includes every category, subcategory, and product page.

Using Your Browser's "Inspect" Feature

Once the rendered HTML is available and meets the level of a traditional landing page that Google expects, many of the influencing factors will resolve on their own.

To review the rendered HTML and general JavaScript elements, you can use Google Chrome's “Inspect Element” to discover information about the web page that is hidden from users' view. To find JavaScript files that are not visible on the page, such as scripts that handle user interactions, check the "Sources" tab of the developer tools that "Inspect Element" opens.

If some of your content shows up under "Inspect Element" but is missing from the raw page source, chances are it is rendered in the browser with JavaScript, which is known as client-side rendering.

URL Inspection Tool in GSC

The URL Inspection tool in Google Search Console lets you analyze a specific URL on your website to see exactly how Google views it. It provides detailed information from Google's index, such as crawl status, indexing status, and structured data errors that are causing problems.

Increase Page Loading Speed

Google has stated that page speed is one of the signals its algorithms use to rank pages, and faster pages let search engine bots crawl more pages, which helps a site's overall indexing. With JavaScript, making a web page more interactive and dynamic for users can come at a cost to page speed. To mitigate this, it may be advisable to lazy-load components that are not needed at the top of the page.

Be Persistent in Your On-Page SEO Efforts

All the on-page SEO rules for optimizing your pages to help them rank in search engines still apply. Optimize your title tags, meta descriptions, alt attributes on images, and meta robots tags. Unique and descriptive titles and meta descriptions help users and search engines easily identify the content. Pay attention to search intent and the strategic placement of semantically relevant keywords.

It's also good to have an SEO-friendly URL structure. In some cases, websites change the URL with pushState, which can confuse Google when it tries to determine the canonical one. Be sure to check your URLs for such issues.

Make Sure Your JavaScript Appears in the DOM Tree

JavaScript rendering works once a page's DOM is sufficiently loaded. The DOM, or Document Object Model, represents the structure of the page content and the relationship of each element to the others. You can find it under "Inspect Element" in the browser. The DOM is the basis of the dynamically created page.

If your content is visible in the DOM, your content is most likely parsed by Google. Checking the DOM will help you determine if your pages are being accessed by search engine bots.
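The DOM check can be sketched as a comparison between the HTML the server sends and the HTML after scripts have run. This is a minimal illustration, assuming you have both versions as strings; the function name `findClientRenderedPhrases` and the sample markup are hypothetical.

```javascript
// Report which key phrases are missing from the server response but present
// after rendering, which suggests they depend on client-side JavaScript.
function findClientRenderedPhrases(rawHtml, renderedHtml, phrases) {
  return phrases.filter(
    (phrase) => !rawHtml.includes(phrase) && renderedHtml.includes(phrase)
  );
}

// Hypothetical sample data: rawHtml is what the server sends,
// renderedHtml is what the DOM looks like after scripts have run.
const rawHtml = '<div id="app"></div><script src="/app.js"></script>';
const renderedHtml =
  '<div id="app"><h1>Blue Widget</h1><p>In stock</p></div>' +
  '<script src="/app.js"></script>';

const clientOnly = findClientRenderedPhrases(rawHtml, renderedHtml, [
  "Blue Widget",
  "In stock",
  "Out of stock",
]);
console.log(clientOnly); // [ 'Blue Widget', 'In stock' ]
```

In a browser, one way to get the rendered version is to run `document.documentElement.outerHTML` in the developer tools console; the raw version is what "View Page Source" shows.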

Avoid Blocking Search Engines from Accessing JS Content

To get around the problem of Google not being able to find JavaScript content, a few webmasters use a practice called "cloaking", which presents the JS content to users but hides it from crawlers. However, this method is considered a violation of Google's Webmaster Guidelines, and you may be penalized for it. Instead, try to identify the underlying issues and make your JS content accessible to search engines.

Use Relevant HTTP Status Codes

Google's crawlers use HTTP status codes to identify problems when crawling a page. Therefore, you should use a meaningful status code to notify bots that a page should not be crawled or indexed. For example, you could use HTTP status 301 to tell bots that a page has moved to a new URL and let Google update its index accordingly.
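As a rough sketch of how meaningful status codes might be returned, the handler below answers 200 for existing pages, 301 for moved pages, and 404 for everything else. The route tables and paths are hypothetical placeholders, and the handler only assumes the `(req, res)` shape used by Node's built-in `http` module.

```javascript
// Hypothetical route tables: all paths are placeholders.
const pages = new Map([["/products/blue-widget", "<h1>Blue Widget</h1>"]]);
const movedPermanently = new Map([["/old-blue-widget", "/products/blue-widget"]]);

const HTML = { "Content-Type": "text/html; charset=utf-8" };

// Decide the status code, headers, and body for a request path.
function resolveRoute(path) {
  if (movedPermanently.has(path)) {
    // 301 tells bots the page has moved for good and where to find it.
    return { status: 301, headers: { Location: movedPermanently.get(path) }, body: "" };
  }
  if (pages.has(path)) {
    return { status: 200, headers: HTML, body: pages.get(path) };
  }
  // 404 tells bots there is nothing to index at this URL.
  return { status: 404, headers: HTML, body: "<h1>Page not found</h1>" };
}

// Request handler in the (req, res) form that http.createServer accepts.
function handleRequest(req, res) {
  const { pathname } = new URL(req.url, "http://localhost");
  const { status, headers, body } = resolveRoute(pathname);
  res.writeHead(status, headers);
  res.end(body);
}
```

To try it locally, the handler could be passed to `require("node:http").createServer(handleRequest).listen(3000)`, where the port is an arbitrary choice.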

Fix Duplicate Content

When JavaScript is used for websites, there can be different URLs for the same content. When you find such pages, choose the original/preferred URL you want indexed and make sure you set canonical tags so that search engines are not confused.
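One possible way to derive a canonical tag is to normalize each URL variant to a single preferred form. The sketch below assumes that certain query parameters never change the page content; the `IGNORED_PARAMS` list and the example URL are illustrative assumptions, not a fixed rule.

```javascript
// Query parameters assumed not to change the page content
// (an assumption; adjust the list for your own site).
const IGNORED_PARAMS = ["utm_source", "utm_medium", "utm_campaign", "sessionid"];

// Reduce a URL variant to the preferred (canonical) form.
function canonicalUrl(rawUrl) {
  const url = new URL(rawUrl);
  for (const name of IGNORED_PARAMS) url.searchParams.delete(name);
  url.hash = ""; // fragments never identify a separate document
  return url.toString();
}

// Render the canonical tag, escaping characters unsafe in an attribute.
function canonicalTag(rawUrl) {
  const href = canonicalUrl(rawUrl).replace(/&/g, "&amp;").replace(/"/g, "&quot;");
  return `<link rel="canonical" href="${href}">`;
}

console.log(canonicalTag("https://example.com/shoes?utm_source=news&color=red#reviews"));
// <link rel="canonical" href="https://example.com/shoes?color=red">
```

Every variant of the page would then carry the same tag in its head, pointing at the one URL you want indexed.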

Fix Lazy-Loaded Content and Images

Site speed is very important for SEO. Lazy loading is a user experience best practice that delays the loading of non-critical or non-visible content, thereby reducing the initial page load time. But in addition to making pages load faster, you need to keep your content accessible to search engine crawlers. If crawlers do not run the JavaScript that loads this content, they never see it, which negatively impacts your SEO.

What's more, image search is an additional source of organic traffic. So if your lazy-loaded images are not accessible to crawlers, search engines won't pick them up.
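One way to keep lazy-loaded images discoverable is to rely on the browser's native `loading="lazy"` attribute and keep the real image URL in `src`, so the URL is present in the markup without any script having to run. The helper below is a hypothetical server-side template function; the image path and dimensions are placeholders.

```javascript
// Escape characters that are unsafe inside an HTML attribute value.
function escapeAttr(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Render an <img> that lazy-loads natively while keeping the real URL in src.
function lazyImage({ src, alt, width, height }) {
  return (
    `<img src="${escapeAttr(src)}" alt="${escapeAttr(alt)}" ` +
    `width="${width}" height="${height}" loading="lazy">`
  );
}

console.log(
  lazyImage({ src: "/img/blue-widget.jpg", alt: "Blue widget, front view", width: 800, height: 600 })
);
// <img src="/img/blue-widget.jpg" alt="Blue widget, front view" width="800" height="600" loading="lazy">
```

Setting `width` and `height` lets the browser reserve space before the image arrives, which avoids layout shifts when it loads.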

JavaScript Defer and Async

JavaScript is placed within the page's code, and the code runs from top to bottom. If your script contains a lot of code, your website will take longer to load. But by deferring non-critical scripts, you can keep the browser from parsing them up front and increase your site speed.

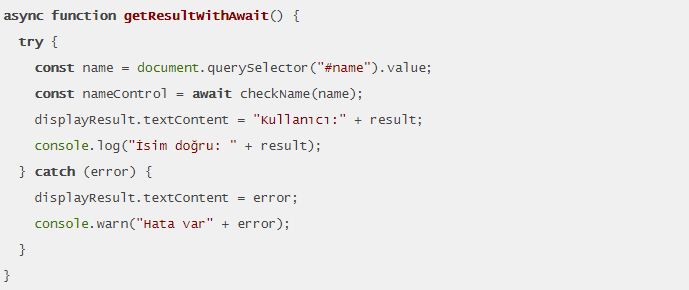

async/await keywords: async and await are keywords in JavaScript and in several other programming languages. Marking a function as async lets it operate asynchronously, that is, independently of the main program flow. Because async functions do not block the code that follows the call, other work can continue while they wait. Inside an async function, you can wait for an asynchronous operation to finish with the await keyword. async and await are a more modern way to write asynchronous code than threading.
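A small sketch of async and await in JavaScript follows. The loader functions and their timings are hypothetical stand-ins for real network requests.

```javascript
// Resolve with `value` after `ms` milliseconds; stands in for a network request.
function delay(ms, value) {
  return new Promise((resolve) => setTimeout(() => resolve(value), ms));
}

// Hypothetical loaders; names and timings are placeholders.
async function loadReviews() {
  return delay(30, "reviews");
}
async function loadRelatedProducts() {
  return delay(10, "related products");
}

async function loadSecondaryContent() {
  // Both tasks start immediately and run concurrently;
  // `await` pauses only this function until both have finished.
  const [reviews, related] = await Promise.all([
    loadReviews(),
    loadRelatedProducts(),
  ]);
  return [reviews, related];
}

const pending = loadSecondaryContent();
console.log("main flow continues"); // printed before the results arrive
pending.then((parts) => console.log("loaded:", parts.join(", ")));
```

The call to `loadSecondaryContent()` returns a promise right away, so the rest of the script keeps running while the two loaders wait.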

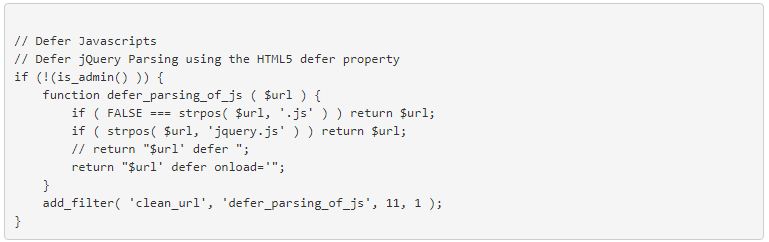

defer attribute: The defer attribute tells the browser to download a JavaScript file in the background and run it only after the page's HTML has been parsed. This speeds up the rendering of the page.

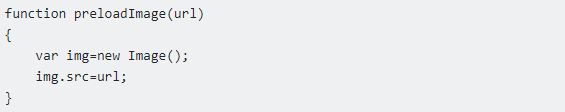

preload: With preload, you can tell the browser to start fetching important resources, such as the images you upload to your site, earlier than it would otherwise discover them. This is also a way to increase site speed.
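The markup behind these loading hints can be sketched with a hypothetical server-side helper that builds the tags for a page's head. It shows the `async` and `defer` script attributes and a `rel="preload"` link, which asks the browser to fetch a resource early; all file paths are placeholders.

```javascript
// Build a <script> tag. mode "async" runs the script as soon as it has
// downloaded; "defer" runs it after the HTML has been parsed; any other
// value produces a plain script that blocks parsing while it loads.
function scriptTag(src, mode) {
  const attr = mode === "async" || mode === "defer" ? ` ${mode}` : "";
  return `<script src="${src}"${attr}></script>`;
}

// Build a preload hint; `as` names the resource type, for example "image".
function preloadTag(href, as) {
  return `<link rel="preload" href="${href}" as="${as}">`;
}

// Placeholder paths, for illustration only.
const head = [
  preloadTag("/img/hero.jpg", "image"),
  scriptTag("/js/analytics.js", "async"),
  scriptTag("/js/app.js", "defer"),
].join("\n");

console.log(head);
// <link rel="preload" href="/img/hero.jpg" as="image">
// <script src="/js/analytics.js" async></script>
// <script src="/js/app.js" defer></script>
```

As a rule of thumb, `async` suits independent scripts such as analytics, while `defer` suits scripts that need the full DOM or must run in document order.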

Mistakes to Avoid in SEO for JavaScript

If you're using JavaScript on your website, Google can now render elements pretty well after the load event and finally read and index the snapshot like a traditional HTML site.

Most problems with JavaScript and SEO are due to incorrect implementation. Therefore, many common SEO best practices can also be used for JavaScript SEO. These are a few of the most common mistakes that can occur:

1. Indexable URLs: Every website requires unique and distinctive URLs for its pages to be fully indexed. However, a URL set with pushState exists only in the browser, because it is created with JavaScript and not by the server. Therefore, each page of your JavaScript site also requires its own web document that returns a 200 OK status code as the server response to a client or bot request. Every product served with JS (or every category of your website built with JS) must therefore have a server-side URL in order for your site to be indexed.

2. PushState Errors: URLs can be changed in JavaScript with the pushState method. Therefore, you must make absolutely sure that the original URL is also served with server-side support. Otherwise, you run the risk of duplicate content.

3. Missing Metadata: When using JavaScript, many webmasters or SEOs forget the basics and do not pass metadata to the bot. However, the same SEO standards apply to JavaScript content as to HTML sites. Therefore, be sure to provide titles, meta descriptions, and alt attributes for images.

4. href and src: Googlebot needs links to follow so it can find more pages. So you should also provide links with href or src attributes in your JS documents.

5. Create Unified Versions: Rendering JavaScript produces pre-DOM and post-DOM versions of a page. Where possible, make sure no conflicts arise between them so that, for example, canonical tags and pagination can be interpreted correctly. This way you avoid cloaking.

6. Create Access For All Bots: Not all bots can handle JavaScript like Googlebot. That's why it's recommended to embed titles, meta information, and social tags in the HTML code.

7. Do Not Block JS Via robots.txt: Make sure your JavaScript can also be crawled by Googlebot. To allow this, the directories that contain your scripts should not be disallowed in the robots.txt file.

8. Use a Valid Sitemap: You should always keep the "lastmod" element up to date in your XML sitemap to show Google possible changes in JavaScript content.
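For the sitemap advice in point 8, a minimal sketch of generating an XML sitemap with a `lastmod` value per URL might look like the following. The function name `buildSitemap`, the example URL, and the date are hypothetical; in practice the modification date would come from your CMS or build process.

```javascript
// Escape characters that are not allowed as-is in XML text.
function escapeXml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Build a sitemap from entries of the form { loc, lastModified },
// where lastModified is a Date. lastmod is written as YYYY-MM-DD (UTC).
function buildSitemap(entries) {
  const urls = entries.map(
    ({ loc, lastModified }) =>
      "  <url>\n" +
      `    <loc>${escapeXml(loc)}</loc>\n` +
      `    <lastmod>${lastModified.toISOString().slice(0, 10)}</lastmod>\n` +
      "  </url>"
  );
  return (
    '<?xml version="1.0" encoding="UTF-8"?>\n' +
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n' +
    urls.join("\n") +
    "\n</urlset>"
  );
}

// Placeholder entry, for illustration only.
const sitemap = buildSitemap([
  {
    loc: "https://example.com/products/blue-widget",
    lastModified: new Date("2024-03-05T12:00:00Z"),
  },
]);
console.log(sitemap);
```

Regenerating the file whenever JavaScript-driven content changes keeps the `lastmod` values meaningful to crawlers.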